I asked ChatGPT if I should drive or walk to the car wash to get my car washed — and it struggled with basic logic

If AI can’t solve a basic real-world problem, can it truly be trusted with bigger decisions?

Artificial intelligence is advancing rapidly, and many tasks that once required human judgment are now supported, or in some cases entirely taken over, by AI. These tools are increasingly relied upon for everyday decision-making, from financial planning to route optimization. But is the technology mature enough to solve even basic, real-world problems?

To explore that question, I set up a simple test designed to examine how AI interprets practical scenarios and applies logic when context matters.

The question

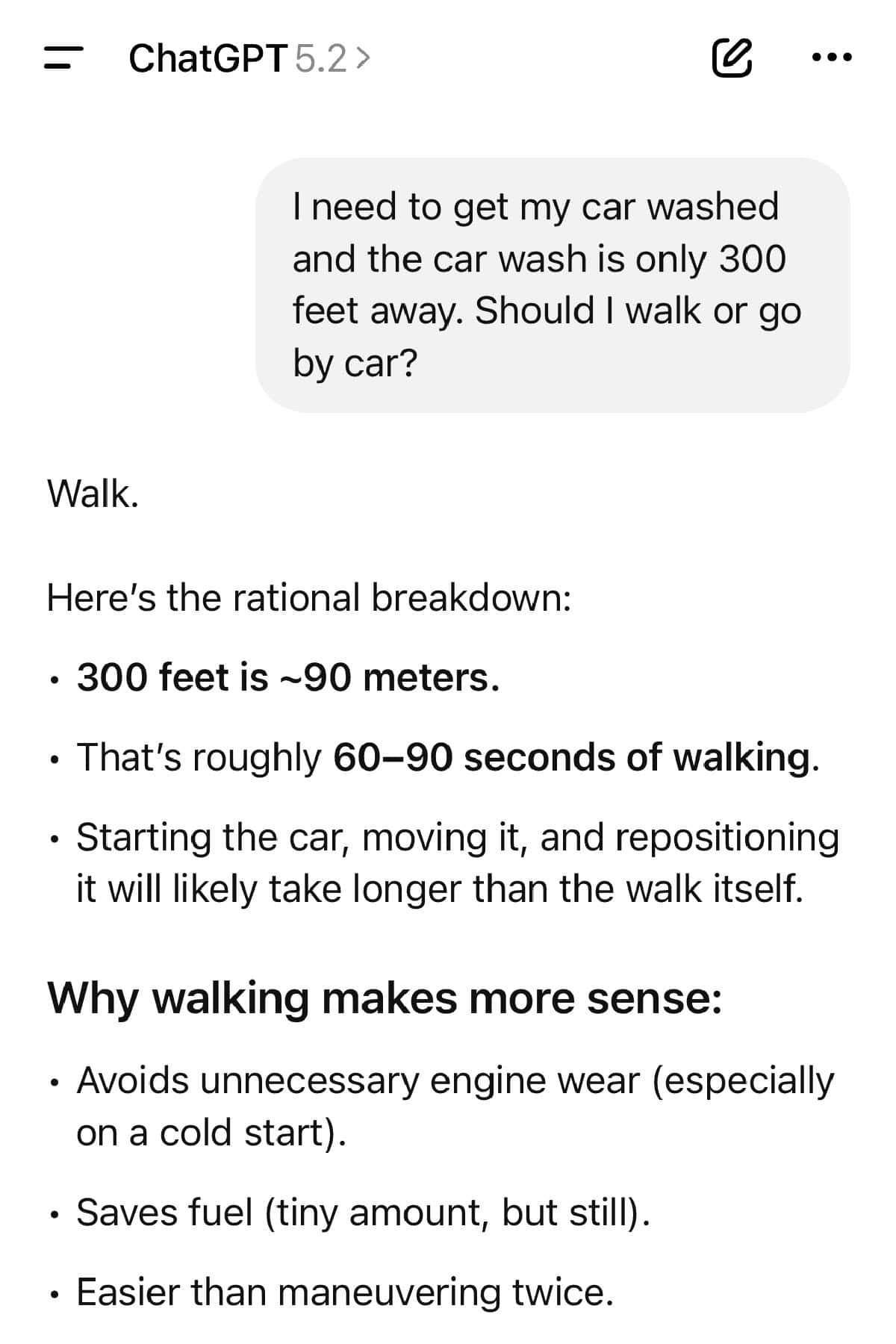

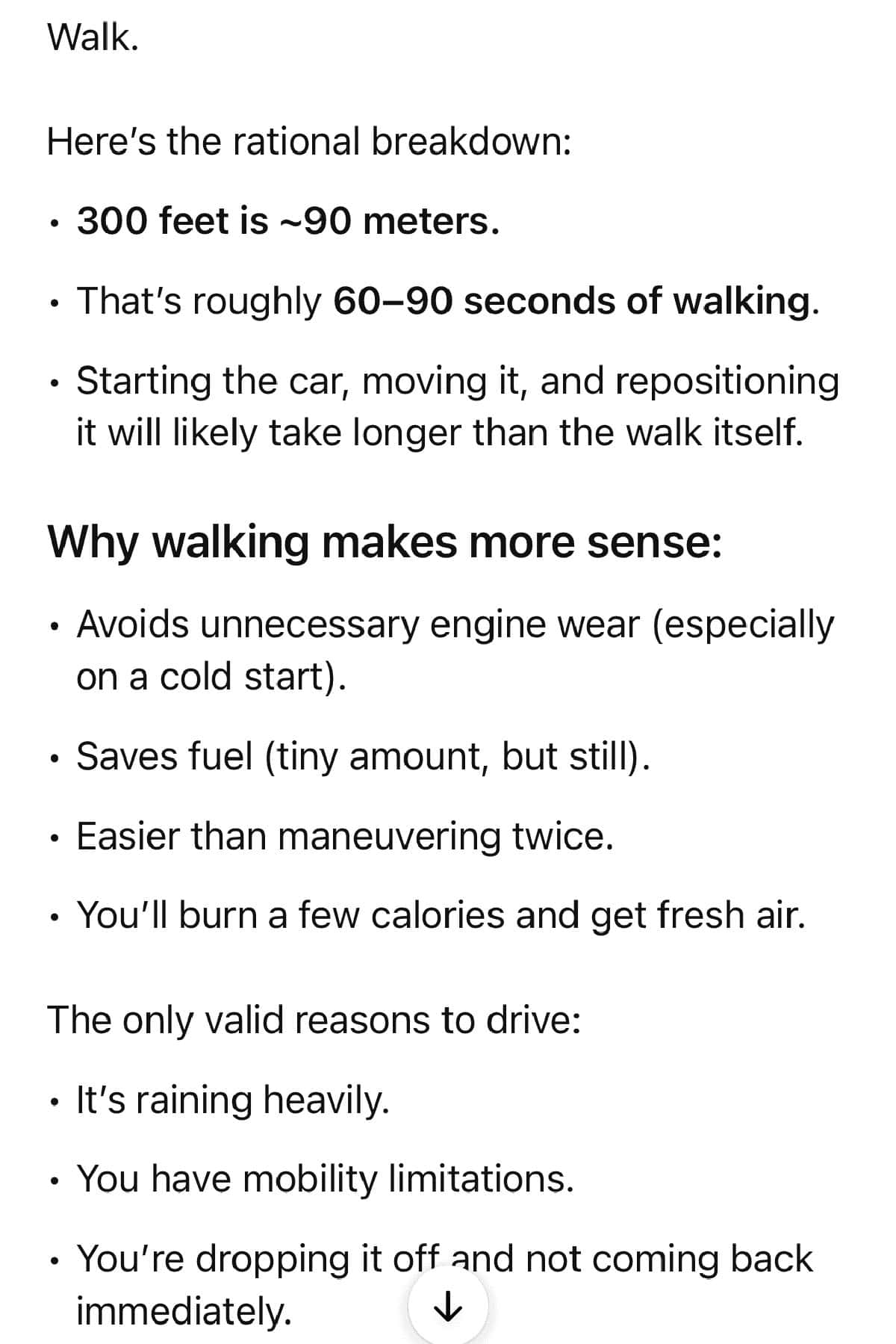

The question was intentionally simple: “I need to get my car washed and the car wash is only 300 feet away. Should I walk or go by car?”

The purpose was not to seek advice on transportation but to examine how AI interprets context. Would it analyze the full logistical requirement of the situation, or would it treat the question purely as a distance-based efficiency calculation? On its surface, it was a basic question, but basic questions often expose the assumptions a system brings to them. A 300-foot walk takes about a minute, yet in the real world nobody would seriously weigh walking against driving here: the car has to make the trip.

The answer

The short answer was: walk. ChatGPT explained that 300 feet is roughly a one-minute walk and reasoned that starting the car, driving such a short distance, and parking properly would take more time. The response also cited fuel efficiency, unnecessary engine wear, and even the added benefit of light physical activity.

On the surface, the answer sounded logical. It was clearly structured and presented as practical advice grounded in efficiency, and its warning that frequent short trips add wear to a vehicle is not unreasonable in itself.

Still, the answer was completely wrong.

The response failed to recognize the task’s most fundamental requirement: the car must be physically present at the car wash. Walking there without the vehicle would make the entire trip pointless. The AI evaluated distance, time, and energy expenditure but overlooked the core logistical necessity in the question.

This wasn’t a nuance. It was the core of the problem.

What went wrong

The issue was not a misunderstanding of walking distance or fuel usage. Rather, ChatGPT interpreted the question as a theoretical efficiency comparison instead of a real-world scenario involving the transportation of an object.

The system analyzed variables such as time and effort but did not account for the broader context: the car itself was the thing that needed to move. Its reasoning optimized for efficiency while ignoring the primary objective.

In practical terms, it solved the wrong problem.

What this reveals about AI’s limits

This example highlights a bigger issue that often gets overlooked in conversations about artificial intelligence replacing human work.

AI systems are good at pattern recognition and surface-level reasoning. They are far less reliable when it comes to situational awareness. AI doesn’t understand context or real-world constraints unless they’re explicitly spelled out.

Humans don’t need to be told that a car wash requires a car. That understanding comes from lived experience, not data processing. It’s the kind of knowledge that feels too obvious to mention, which is exactly why AI struggles with it.

If an AI system can’t recognize that walking to a car wash without a car defeats the purpose, its ability to independently handle complex responsibilities is limited. Many jobs rely not just on efficiency, but on spotting missing information and knowing when a question itself doesn’t make sense.

Even though AI technology is improving quickly, many experts question whether the pace of growth is sustainable in the long run. At the time of writing, OpenAI, the company behind ChatGPT, is struggling to turn a profit and is burning through cash at an alarming rate. Experts believe that further improving AI capability will require significantly more resources.

Confidence is not competence

One of the most interesting parts of the interaction wasn’t the mistake itself, but how confidently it was delivered. The answer sounded reasonable enough that it could easily pass without scrutiny, especially if the reader assumed the AI had already considered all relevant factors.

That’s where the risk lies. AI doesn’t signal uncertainty unless prompted. It doesn’t raise its hand and say something might be missing. It offers answers, not judgment.

Humans, by contrast, adjust based on unstated realities. We notice when a solution won’t work, even if we can’t immediately articulate why.

The takeaway

This wasn’t a sophisticated test or a trick question. It was a simple scenario that exposed a fundamental weakness. ChatGPT didn’t fail because it lacks intelligence in the traditional sense. It failed because it lacks lived understanding. It can process information, but it doesn’t grasp situations. And until that gap is addressed, claims that AI can replace human decision-making should be treated with caution.

Sometimes the problem isn’t that humans overthink small decisions. It’s that we’re too quick to trust systems that sound confident, even when they don’t understand the basics. In this case, the smartest choice wasn’t walking or driving. It was recognizing that the tool giving advice didn’t understand the task at all.